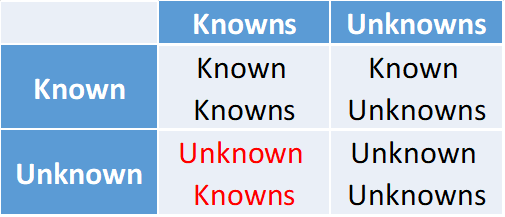

Rumsfeld's missing quadrant

Unknown Knowns are things that we don’t know we know – available evidence that we fail to take into account because a blind spot prevents us from seeing it.

Othello and Bob: two unlikely bedfellows in the grip of the Prior indifference fallacy.

Othello badly misjudged the probability of his beloved wife’s infidelity, and paid the ultimate price. Bob made the same mistake with the probability of carrying a rare virus, and had the scare of his life.

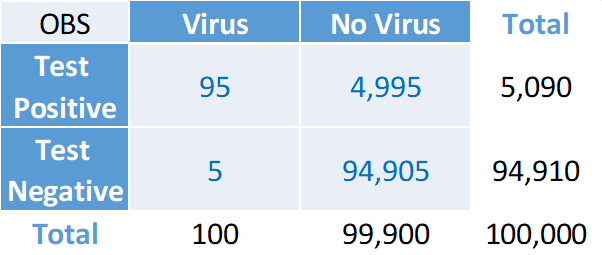

Let’s look at their massive blunder from a different angle. Bob panicked after getting a positive result from a 95% accurate test. What does it mean? Let’s again examine a 2x2 Observation table, where we imagine the test has been carried out on 100,000 people. The table provides hard evidence on the test’s accuracy as a result of a controlled, replicable experiment grounded on empirical frequencies – the Gold Standard of Probability Theory. Here is what the numbers would look like:

| | Infected | Healthy | Total |
|---|---|---|---|
| Test positive | 95 | 4,995 | 5,090 |
| Test negative | 5 | 94,905 | 94,910 |
| Total | 100 | 99,900 | 100,000 |

Let’s look at the main features of the data.

First, the virus is rare: it affects 100 out of 100,000 people: BR=0.1%

Second, the test is 95% symmetrically accurate: it correctly detects 95 of the 100 infected individuals: TPR=95%. And it correctly detects 94,905 of the 99,900 healthy ones: TNR=95%.

Third, it follows that 5 of the 100 infected individuals are wrongly diagnosed as healthy: FNR=5%. And 4,995 of the 99,900 healthy individuals are wrongly diagnosed as infected: FPR=5%.

Putting it all together, here is the key step: out of a total of 5,090 individuals who, like Bob, test positive, only 95 are True Positives, whereas 4,995 – the vast majority – are False Positives. Therefore, the posterior probability of infection after a positive test result is 95/5090=1.9%.
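
The counts above can be reproduced with a few lines of Python. This is only a sketch: the function name `observation_table` and its parameters are made up for illustration, and the inputs are the figures quoted in the text (100,000 people, BR=0.1%, TPR=TNR=95%).

```python
def observation_table(population, base_rate, tpr, tnr):
    """Build the four cells of a 2x2 Observation table as whole-person counts."""
    infected = round(population * base_rate)
    healthy = population - infected
    tp = round(infected * tpr)   # infected, test positive
    fn = infected - tp           # infected, test negative
    tn = round(healthy * tnr)    # healthy, test negative
    fp = healthy - tn            # healthy, test positive
    return {"TP": tp, "FN": fn, "FP": fp, "TN": tn}


table = observation_table(100_000, 0.001, 0.95, 0.95)
positives = table["TP"] + table["FP"]
posterior = table["TP"] / positives

print(table)
print(f"{positives} positives, of which {table['TP']} are true: {posterior:.1%}")
```

The posterior is simply the share of True Positives among all positives, which is why the 4,995 False Positives drawn from the large healthy group dominate the result.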

This is a 19-fold increase from the 0.1% Base Rate, but it still falls very short of supporting the hypothesis of infection. As we know, for the test to be supportive, it needs to be much more accurate: TPR>1-BR=99.9% – a near conclusive test.
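
The threshold can be checked with a short derivation. It is a sketch under two assumptions that this passage does not restate: that "supportive" means a posterior probability above 50%, and that the test is symmetric, so a single accuracy $a$ stands for both TPR and TNR. The symbols $a$, $H$ (infection) and $E$ (positive result) are introduced here purely for illustration.

```latex
\begin{align*}
P(H \mid E) = \frac{a \cdot BR}{a \cdot BR + (1-a)(1-BR)} &> \frac{1}{2} \\
\iff\quad a \cdot BR &> (1-a)(1-BR) \\
\iff\quad a \cdot BR &> 1 - a - BR + a \cdot BR \\
\iff\quad a &> 1 - BR
\end{align*}
```

With BR=0.1%, a test that is exactly 99.9% accurate gives a posterior of exactly 50%; anything less accurate leaves infection the less likely hypothesis.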

Bob missed this last step. Blinded by the evidence of a highly accurate test, he ignored the Base Rate, fell prey to Prior indifference and Perfect ignorance – I don’t know whether I have the virus or not – and committed the Inverse fallacy, thus mistaking Accuracy for Support and massively overestimating the probability of infection.

Othello made the same mistake. In his case there was no hard evidence: Othello’s probabilities were entirely subjective, based on soft evidence and grounded on beliefs, confidence, and trust. We assumed BR=1% and asymmetric evidence with TPR=50% and TNR=95% (FPR=5%), resulting in a higher but still widely unsupportive posterior probability of betrayal of about 9%. But, blinded by the infamous handkerchief evidence planted by the wily Iago, Othello also drifted into Prior indifference – I think my wife be honest, and think she is not – thereby inflating the probability of betrayal all the way to 91%.
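
Othello's two numbers follow from the same formula applied to his assumed inputs. The worked arithmetic below uses $H$ for betrayal and $E$ for the handkerchief evidence, labels chosen here for illustration; the second line repeats the calculation with the Base Rate shifted to 50%.

```latex
\begin{align*}
P(H \mid E) &= \frac{TPR \cdot BR}{TPR \cdot BR + FPR \cdot (1 - BR)} \\[4pt]
BR = 1\%: \quad P(H \mid E) &= \frac{0.50 \times 0.01}{0.50 \times 0.01 + 0.05 \times 0.99} = \frac{0.005}{0.0545} \approx 9.2\% \\[4pt]
BR = 50\%: \quad P(H \mid E) &= \frac{0.50 \times 0.50}{0.50 \times 0.50 + 0.05 \times 0.50} = \frac{0.25}{0.275} \approx 90.9\%
\end{align*}
```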

Two massive blunders indeed. Triggered by accurate but inconclusive evidence. As Tom the statistician told his friend, in the absence of a test Bob would have naturally enquired about the frequency of the virus. Likewise, without Iago’s plot, Othello would have kept his faith in Desdemona’s honesty. What drives them away from a sound prior is their craving for certainty.

Othello and Bob are both very uncomfortable with uncertainty. They want proof: conclusive evidence that would enable them to know the Truth regardless of priors, thus bringing an end to their agony – one way or the other. So when accurate evidence appears, their priors disappear in the background: they become unknown knowns.

As former US Secretary of Defence Donald Rumsfeld famously said:[1]

There are known knowns; there are things we know we know. We also know there are known unknowns; that is to say we know there are some things we do not know. But there are also unknown unknowns – the ones we don’t know we don’t know.

But there is a fourth quadrant in Rumsfeld’s Matrix:

Unknown Knowns are things that we don’t know we know – available evidence that we fail to take into account because a blind spot prevents us from seeing it. Prior indifference renders the Base Rate an Unknown Known.

Remember BR=50% does not necessarily mean that we believe the prior probability of the hypothesis is 50%. It may just mean that we believe we know nothing at all – Perfect ignorance. Do I have the virus? Is my wife unfaithful? If the answer is: I have no idea, we have fallen prey to the Prior indifference fallacy.

Why is it a fallacy? Because it is hardly ever true that we have no idea. Most times our priors contain plenty of background evidence that we wrongly ignore.

Bob hears on television that forty people have recently died from a lethal virus. He is not a Martian catapulted to Earth with no knowledge of earthly matters: although he is worried, he can easily find out that the virus is rare: it hits about one in a thousand.

But hang on. 1/1000 is the probability of drawing an infected person from the general population. This is not what Bob is after: he wants to know the probability that he has the virus. This could be properly assessed only by comparing himself to others who are more like him: people who share the same, or at least a comparable, probability of getting infected. But what does comparable mean? For instance, future genetic research may reveal a link between the virus and a particular gene, which is found in, say, only 2% of the population. If a person does not carry that gene, he will certainly not get the virus. But if he has it, the probability of getting it is 5% (apologies to virologists: it is a stylised example). Thanks to this discovery, we would find that the 1/1000 population Base Rate is really the product of 2% times 5%. So, in a sample of 100,000 people, 100 carry the gene and get the virus, 1,900 carry the gene but do not get it, and the remaining 98,000 have no gene and therefore no virus. Or perhaps the gene is present in only 1% of the population, and those who carry it have a 10% probability of getting the virus. Or maybe it is such a rare gene that it has a 0.5% frequency, and the unlucky ones have a 20% chance of being affected. And why not go all the way: only one in a thousand have the gene, and they are all predestined to get the virus.
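
The four gene scenarios can be laid side by side to confirm that each one reproduces the same population-wide rate. This is a rough sketch: the variable names are invented for illustration, and the frequencies are the stylised figures from the paragraph above, not real epidemiological data.

```python
from fractions import Fraction

POPULATION = 100_000

# (share of the population carrying the gene, probability of infection for a carrier)
scenarios = [
    (Fraction(2, 100), Fraction(5, 100)),
    (Fraction(1, 100), Fraction(10, 100)),
    (Fraction(5, 1000), Fraction(20, 100)),
    (Fraction(1, 1000), Fraction(1)),
]

rows = []
for gene_freq, risk in scenarios:
    carriers = int(POPULATION * gene_freq)
    infected = int(carriers * risk)
    spared = carriers - infected
    population_rate = gene_freq * risk  # exact product, no rounding error
    rows.append((carriers, infected, spared, population_rate))
    print(f"gene {float(gene_freq):.1%} x risk {float(risk):.0%}: "
          f"{carriers} carriers, {infected} infected, {spared} spared, "
          f"population rate {population_rate}")
```

In every row the population-wide Base Rate stays at 1/1000, while the rate inside the reference class runs from 5% up to 100%.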

The Base Rate and, with it, the posterior probability of the hypothesis depend on the definition of the relevant population. You don’t try the virus test on people who cannot get the virus, just as you don’t try shampoo on bald men. What is the appropriate Base Rate? Given the current state of knowledge, it is 1/1000. But it could be completely different, depending on the definition of the appropriate reference class. We can think of the reference class as an image of the state of knowledge about the virus. The more we know, the smaller the reference class. Indeed, knowledge can be defined as a progressive narrowing down of possibilities (remember Sherlock). The smaller the reference class, the higher the Base Rate for individuals belonging to that class. In the limit, knowledge about the virus could become so complete as to allow us to narrow down the population to precisely those one-in-a-thousand individuals who are certain to get the virus.
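
To see how strongly the posterior depends on the reference class, one can feed each class's Base Rate into the same positive test result. The sketch below assumes, beyond what the text states, that the test remains 95% symmetrically accurate within every reference class; the function name and labels are illustrative.

```python
def posterior_after_positive(base_rate, accuracy=0.95):
    """Posterior probability of infection after a positive result,
    for a symmetric test (TPR = TNR = accuracy)."""
    true_pos = accuracy * base_rate
    false_pos = (1 - accuracy) * (1 - base_rate)
    return true_pos / (true_pos + false_pos)


# Base Rate inside each reference class, from the stylised gene scenarios
reference_classes = {
    "general population": 0.001,
    "gene carriers, 5% risk": 0.05,
    "gene carriers, 10% risk": 0.10,
    "gene carriers, 20% risk": 0.20,
    "gene carriers, 100% risk": 1.0,
}

posteriors = {label: posterior_after_positive(br)
              for label, br in reference_classes.items()}

for label, p in posteriors.items():
    print(f"{label:<26} BR = {reference_classes[label]:>6.1%}  posterior = {p:.1%}")
```

The same positive result supports very different conclusions: under 2% for a member of the general population, about 50% for a carrier with a 5% risk, and certainty in the limiting case.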

This uncertainty about the appropriate reference class is distinctly Knightian. Given current knowledge, the Base Rate is 1/1000, but with increased knowledge it could be anywhere between 0 and 1 – like drawing from an urn with white and black balls in unknown proportions. It is in this state of uncertainty, and with the aim of finding the Truth, that Bob takes the test. And after the test’s response he no longer sees himself as a comparable member of the general population. The 1/1000 Base Rate – so clear and consequential until then – is Swept Under The Carpet (SUTC):[2] it becomes an Unknown Known. Bob no longer knows which reference class he belongs to, hence he cannot define the relevant Base Rate. And since an undefinable Base Rate could be anywhere between 0 and 1, he picks the neutral midpoint: he becomes prior indifferent. He simply thinks: I may or may not have the virus, attaches an equal chance to the two possibilities, and lets the test decide. And if the test says he has the virus, he believes it. The urge to resolve this uncomfortable state of Knightian uncertainty is what delivers Bob into the hands of the test response.

Othello is lost in the same conundrum. True, Desdemona is the one and only, but the prior probability that she is an adulterer cannot be left to wishful thinking: it should be grounded in objective data. But this is easier said than done. Should Othello look for the percentage of female adulterers in Venice? This would give him the probability that a randomly selected Venetian woman is an adulterer. But is this what he is after? Surely Desdemona is not an average Venetian. Should he limit the sample to women who are more like Desdemona? But what does that mean? Choosing an appropriate Base Rate is such a difficult and ultimately arbitrary decision that one is tempted to admit defeat, declare total ignorance and assume Prior indifference: “Look, I have no idea if Desdemona is betraying me or not. She may, she may not, I simply don’t know.” Once Othello, nudged by the wily Iago, falls prey to the Prior indifference fallacy, he’s done: the handkerchief in Cassio’s lodgings gives him plenty of evidence of betrayal.

Seen in this light, Prior indifference is a distortion of Bayesian updating. While a correct update takes BR and increases or decreases it according to the likelihood of new evidence, Prior indifference triggers an inadvertent shift of the Base Rate to 50% before the update takes place. As a result, the update builds on Knightian uncertainty and Perfect ignorance, rather than on prior beliefs.
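
The distortion is easiest to see in the odds form of Bayes' rule, where the update is a single multiplication of prior odds by the likelihood ratio TPR/FPR. The odds form is a standard, equivalent restatement rather than the notation used in the text, and the helper name `update` is invented here for illustration.

```python
def update(prior, tpr, fpr):
    """Bayesian update in odds form:
    posterior odds = prior odds * likelihood ratio (TPR / FPR)."""
    prior_odds = prior / (1 - prior)
    posterior_odds = prior_odds * (tpr / fpr)
    return posterior_odds / (1 + posterior_odds)


# (Base Rate, TPR, FPR) as assumed in the text for each character
cases = {
    "Bob": (0.001, 0.95, 0.05),
    "Othello": (0.01, 0.50, 0.05),
}

results = {}
for name, (base_rate, tpr, fpr) in cases.items():
    correct = update(base_rate, tpr, fpr)   # update starts from the Base Rate
    indifferent = update(0.5, tpr, fpr)     # Base Rate silently shifted to 50%
    results[name] = (correct, indifferent)
    print(f"{name}: correct update {correct:.1%}, prior indifferent {indifferent:.1%}")
```

Under Prior indifference the prior odds are 1, so the posterior odds equal the likelihood ratio itself. For Bob's symmetric test this returns exactly the test's accuracy, 95%, which is how Accuracy ends up mistaken for Support.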

With meaningful, momentous and sometimes tragic consequences.

[2] I. J. Good, Good Thinking, p. 23.