Cromwell's Rule

I beseech you, in the bowels of Christ, think it possible that you may be mistaken.

I hope you now have a good grip on Bayes formula. I encourage you to set it up in a spreadsheet and play around with it: tweak the three inputs and watch how the posterior probability responds.

Be liberal in your experiments. The three parameters are independent, so as long as you stay between 0 and 1, you are free to explore all sorts of combinations.

We have already started in the previous post, and we shall do more here, beginning with a derivation of Bayes Theorem from its most basic elements: the observations.

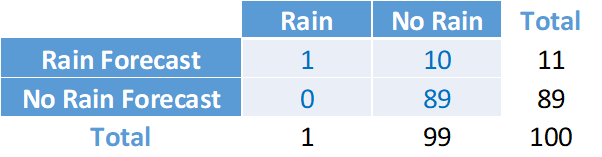

Let’s use the rain example again. Assume we take 100 observations and place them in the four quadrants of the following 2x2 table:

The two columns represent the possible states of hypothesis H: True – Rain tomorrow; False – No Rain tomorrow. The two rows represent the possible states of Evidence E: Positive – The weather report forecasts Rain; Negative – The weather report forecasts No Rain. Starting from the first quadrant on the top left, and moving clockwise using the numbers assumed in the example, we place the 100 observations according to their joint outcomes:

True Positives: It rained and the report rightly forecast it would.

False Positives: It did not rain but the report wrongly forecast it would.

True Negatives: It did not rain and the report rightly forecast it would not.

False Negatives: It rained but the report wrongly forecast it would not.

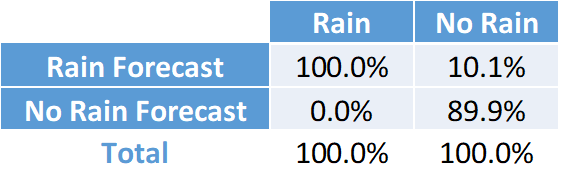

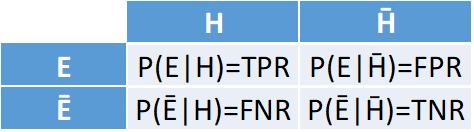

We can use as many observations as we want. We then normalise the result by dividing each of the four numbers by its column total (the total for its state of H), thus deriving the four conditional probabilities of the Evidence given the Hypothesis:

P(E|H)=1/1=100%. We call this the True Positive Rate (TPR)

P(E|H̄)=10/99=10.1%. We call this the False Positive Rate (FPR)

P(Ē|H̄)=89/99=89.9%. We call this the True Negative Rate (TNR)

P(Ē|H)=0/1=0%. We call this the False Negative Rate (FNR)

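In general, writing TP, FP, TN and FN for the numbers of True Positives, False Positives, True Negatives and False Negatives, we have:

TPR = P(E|H) = TP/(TP+FN)

FPR = P(E|H̄) = FP/(FP+TN)

TNR = P(Ē|H̄) = TN/(FP+TN)

FNR = P(Ē|H) = FN/(TP+FN)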

We call them Likelihoods. Notice that, by definition, TPR=1-FNR and FPR=1-TNR. But there is no direct relationship between TPR and FPR – that’s why you can freely experiment with them.

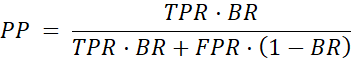

One last definition. Notice that the prior probability P(H) is equal to the total number of observations in the H column over the total number of observations in the table: 1/100=1% in our example. P(H) is the probability of H regardless of evidence E. It is therefore also known as the Base Rate (BR).
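To check the arithmetic, here is a minimal Python sketch that derives the four likelihoods and the Base Rate from the counts of the rain example (1 True Positive, 10 False Positives, 89 True Negatives, 0 False Negatives). The function name `rates_from_counts` is hypothetical, chosen only for illustration.

```python
def rates_from_counts(tp, fp, tn, fn):
    """Return the four likelihoods and the Base Rate from the quadrant counts.

    Columns are the states of H (Rain / No Rain), so each likelihood is a
    count divided by its column total.
    """
    h_total = tp + fn          # observations in the H column (it rained)
    not_h_total = fp + tn      # observations in the not-H column (no rain)
    n = h_total + not_h_total  # all observations in the table
    return {
        "TPR": tp / h_total,       # P(E|H)
        "FNR": fn / h_total,       # P(not E|H)
        "FPR": fp / not_h_total,   # P(E|not H)
        "TNR": tn / not_h_total,   # P(not E|not H)
        "BR": h_total / n,         # P(H), the prior
    }


rates = rates_from_counts(tp=1, fp=10, tn=89, fn=0)
for name, value in rates.items():
    print(f"{name} = {value:.1%}")
```

Whatever counts you feed in, TPR + FNR and FPR + TNR always add up to 1, because each pair shares the same column total, while TPR and FPR come from different columns and can be set independently.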

Putting it all together, and denoting the Posterior Probability P(H|E) as PP, we can rewrite Bayes formula as:

PP = TPR·BR / [TPR·BR + FPR·(1−BR)]

Here we are: we have reached our final destination. I hope this version of the formula looks less forbidding than the original.
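The formula PP = TPR·BR / [TPR·BR + FPR·(1−BR)] fits in a few lines of Python. This minimal sketch (the function name `posterior` is hypothetical) checks it against the table: the posterior should equal the True Positives divided by all positive forecasts, TP/(TP+FP) = 1/11 in the rain example.

```python
def posterior(br, tpr, fpr):
    """Bayes formula in this post's notation: PP = TPR*BR / (TPR*BR + FPR*(1 - BR))."""
    for name, p in (("BR", br), ("TPR", tpr), ("FPR", fpr)):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"{name} must lie between 0 and 1, got {p}")
    numerator = tpr * br                      # weight of E coming from H
    denominator = numerator + fpr * (1 - br)  # total weight of E
    if denominator == 0:
        # e.g. BR = 0 together with FPR = 0: the formula becomes 0/0
        raise ZeroDivisionError("PP is undefined when TPR*BR + FPR*(1 - BR) = 0")
    return numerator / denominator


# Rain example: BR = 1/100, TPR = 1/1, FPR = 10/99
pp = posterior(br=1 / 100, tpr=1.0, fpr=10 / 99)
print(f"PP = {pp:.1%}")  # prints PP = 9.1%, i.e. TP/(TP+FP) = 1/11
```

Raising an error on a zero denominator is a design choice of this sketch: in those corner cases the formula itself gives no answer, so returning a number would hide the problem.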

We are now ready to start looking at the many remarkable features of Bayes Theorem and to analyse its crucial, fruitful and far-reaching properties.

First: Like all probabilities, PP ranges from 0 to 1. It equals 0 if BR=0 or TPR=0. It equals 1 if BR=1 or FPR=0. (Mathophobes: it’s easy to see – just a little effort please).
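For readers who want the algebra spelled out, here is the substitution, writing Bayes formula in the notation of this post. The first line shows why PP stays between 0 and 1: the numerator is one of the two non-negative terms of the denominator. The "if" conditions are the ones the text leaves implicit: they keep the denominator away from zero, since at the clashing corners (for instance BR=0 together with FPR=0) the formula becomes 0/0 and says nothing.

```latex
\[
PP = \frac{TPR \cdot BR}{TPR \cdot BR + FPR \cdot (1 - BR)},
\qquad 0 \le TPR \cdot BR \le TPR \cdot BR + FPR \cdot (1 - BR)
\;\Rightarrow\; 0 \le PP \le 1
\]
\begin{align*}
BR = 0 &:\quad PP = \frac{0}{0 + FPR} = 0 && \text{if } FPR > 0\\
TPR = 0 &:\quad PP = \frac{0}{0 + FPR \cdot (1 - BR)} = 0 && \text{if } FPR \cdot (1 - BR) > 0\\
BR = 1 &:\quad PP = \frac{TPR}{TPR + 0} = 1 && \text{if } TPR > 0\\
FPR = 0 &:\quad PP = \frac{TPR \cdot BR}{TPR \cdot BR + 0} = 1 && \text{if } TPR \cdot BR > 0
\end{align*}
```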

Think about the implications. Here is one of the most consequential properties of Bayes Theorem:

If BR=0 then PP=0, regardless of TPR and FPR.

If BR=1 then PP=1, regardless of TPR and FPR.

Consider what this means in our examples. If you are a priori certain that it will not rain tomorrow, or that Nvidia is not overpriced, then no amount of evidence – no matter how strong, conclusive, irrefutable – can ever change your mind. At the opposite extreme, if you are a priori certain that it will rain, or that Nvidia is overpriced, there is no way that any kind of evidence will ever move you away from that conviction.

We call this Faith: a prior certainty that no amount of evidence can alter.

Faith renders beliefs immune to evidence, thus disabling Bayes Theorem. For the theorem to have any effect, the Base Rate cannot be trapped at the extremes of Faith. It must differ – if only by a small amount – from 0 and 1.
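A minimal Python sketch of the point, with assumed numbers that are not from the rain example: a forecaster with TPR = 0.99 and FPR = 0.001 says Rain five times in a row. Each report is assumed to be independent given H, so the posterior after one report becomes the prior for the next. The helper name `update` is hypothetical.

```python
def update(prior, tpr, fpr):
    """One Bayesian update on a positive report (hypothetical helper)."""
    return tpr * prior / (tpr * prior + fpr * (1 - prior))


TPR, FPR = 0.99, 0.001  # assumed strong evidence, for illustration only

for prior in (0.0, 1e-6):  # Faith versus a tiny but non-zero Base Rate
    pp = prior
    history = []
    for _ in range(5):     # five positive reports, assumed independent given H
        pp = update(pp, TPR, FPR)
        history.append(f"{pp:.6f}")
    print(f"BR = {prior:g}: " + ", ".join(history))
```

With a prior of exactly 0 the numerator TPR·BR is 0 at every step, so each update returns 0. With a prior of one in a million, a handful of reports is enough to push PP close to 1.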

Hence a good Bayesian adheres to Cromwell’s Rule:

I beseech you, in the bowels of Christ, think it possible that you may be mistaken.

A good Bayesian shuns prior certainty – impermeable to evidence – while at the same time seeking posterior certainty, which is entirely dependent on evidence.

As we know, this can be done in two ways:

Finding a piece of conclusive evidence that proves that the hypothesis is certainly true, or certainly false.

Gathering a large amount of confirming or disconfirming evidence that, though individually inconclusive, bears enough collective weight against the alternative to pull us towards virtual certainty: not exactly 1 or 0, but close enough to make us certain beyond reasonable doubt that the hypothesis is true or false.
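The second route can be sketched in Python under stated assumptions: start undecided at BR = 0.5 and feed in individually weak positive reports, each with TPR = 0.6 and FPR = 0.4 (assumed values), so that no single report is anywhere near conclusive. Treating the reports as independent given H is an assumption of this sketch, not a property of real evidence in general, and the 0.99 threshold for "beyond reasonable doubt" is equally arbitrary. The helper name `update` is hypothetical.

```python
def update(prior, tpr, fpr):
    """Posterior after one positive report (hypothetical helper)."""
    return tpr * prior / (tpr * prior + fpr * (1 - prior))


TPR, FPR = 0.6, 0.4  # assumed weak evidence: one report is far from conclusive
THRESHOLD = 0.99     # assumed cut-off for "beyond reasonable doubt"

pp, reports = 0.5, 0  # start undecided
while pp < THRESHOLD:
    pp = update(pp, TPR, FPR)
    reports += 1
print(f"{reports} positive reports take PP from 0.5 to {pp:.4f}")
```

Each report multiplies the odds of H by TPR/FPR = 1.5, so it takes 12 of them to cross 0.99. Disconfirming reports would push PP towards 0 in the same way, with FNR and TNR in place of TPR and FPR.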

There are four types of conclusive evidence, two related to positive evidence and two to negative evidence. We have just seen the first two:

If TPR=0, then PP=0, regardless of BR and FPR.

If FPR=0, then PP=1, regardless of BR and TPR.

We will examine them in another post.